http://helix.sitecore.net/

Now, once you read through this, I bet that, just like me, you will be tempted to get a copy of your own. I use TDS for my everyday chores, so I got mine from here:

https://github.com/HedgehogDevelopment/Habitat/tree/TDS

Fun fact: the Habitat team maintains two trees of the sample project: one for TDS, and the default one, which uses Unicorn (https://github.com/Sitecore/Habitat).

After reading through bits and pieces of the documentation, I really wanted to play with this, and I would like to share my experience of setting up Habitat on my local machine with you all.

I am ready to get started with Habitat. Are you?

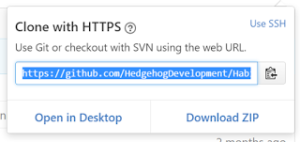

First things first, get the TDS Habitat repo from GitHub. Please note that the clone URL on GitHub does not clone the TDS version (it may not be set up correctly; I will log a comment). So, for now, click Download ZIP instead of cloning the repo to continue with the local setup.
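If you would rather script the download, here is a minimal Python sketch that fetches the TDS branch as a ZIP and extracts it. It assumes GitHub's standard branch-archive URL pattern (`/archive/refs/heads/<branch>.zip`) and that the TDS code lives on a branch named `TDS`, which is what the tree URL above suggests. The helper names `branch_archive_url` and `download_branch` are hypothetical.

```python
import io
import urllib.request
import zipfile
from typing import List


def branch_archive_url(owner: str, repo: str, branch: str) -> str:
    """Build the ZIP archive URL for a GitHub branch (assumed standard pattern)."""
    return f"https://github.com/{owner}/{repo}/archive/refs/heads/{branch}.zip"


def download_branch(owner: str, repo: str, branch: str, dest: str) -> List[str]:
    """Download a snapshot of `branch` and extract it into the `dest` folder.

    Returns the names of the extracted entries. Requires network access.
    """
    url = branch_archive_url(owner, repo, branch)
    with urllib.request.urlopen(url) as response:
        data = response.read()
    # Extract from memory so no temporary file is left behind.
    with zipfile.ZipFile(io.BytesIO(data)) as archive:
        archive.extractall(dest)
        return archive.namelist()
```

Usage would look like `download_branch("HedgehogDevelopment", "Habitat", "TDS", "C:/Projects/Habitat")`, where the destination folder is just an example.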

After this step, I had some serious code on my local machine. The next step needed SIM (Sitecore Instance Manager), which I already had on my machine, so I went straight for it. I installed a fresh Sitecore 8.2 Update 1 instance, as recommended. It did take some time, though I am not sure whether that was down to my internet connection. 🙂

The next step is to install Web Forms for Marketers (WFFM). You can get it as a package specific to your Sitecore version and install it through the site once it is up. In my case, here is the version needed for Sitecore 8.2 Update 1.

Important point to note here: the WFFM module you download from the link above is not itself a Sitecore package. Unzip it and choose the package that matches your server environment; mine is obviously local, so I picked the basic package. After unzipping, pick the one highlighted below when installing from the Sitecore Package Installer.
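Before opening the Package Installer, it can help to see exactly which packages the download contains. Here is a minimal sketch using only Python's standard `zipfile` module; the function name `list_inner_packages` is hypothetical, and it assumes the inner packages are themselves `.zip` files (Sitecore packages are ZIP archives).

```python
import zipfile
from typing import List


def list_inner_packages(download_path: str) -> List[str]:
    """Return the names of the .zip files nested inside the WFFM download.

    Each nested .zip is a candidate Sitecore package; pick the one that
    matches your server environment (for a local setup, the basic one).
    """
    with zipfile.ZipFile(download_path) as outer:
        return sorted(
            name for name in outer.namelist()
            if name.lower().endswith(".zip")
        )
```

This only lists names; deciding which package fits your environment is still a manual step.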

After this step, rebuild the whole solution and make sure there are no errors. Then right-click the solution and select Deploy. A quick note on deploying: it takes a while, as there are a lot of projects and a lot of items to deploy. It timed out for me once; I redeployed and it worked.

Log in to the Sitecore admin (admin/b) and publish the site. Go to your local URL and make sure the site is up and running (screenshot below). For the next steps, I will explore the solution; I am excited to see what Helix/Habitat can do for us. 🙂
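If you want a quick scripted check instead of (or in addition to) opening the browser, here is a minimal Python sketch that reports whether a URL answers with an HTTP 2xx status. The function name `site_is_up` is hypothetical, and the host name you pass in is whatever you gave the instance in SIM.

```python
import urllib.request


def site_is_up(url: str, timeout: float = 30.0) -> bool:
    """Return True if `url` answers with an HTTP 2xx status.

    The first request after a publish or app-pool restart can be slow,
    hence the generous default timeout.
    """
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return 200 <= response.status < 300
    except OSError:
        # URLError, HTTPError (4xx/5xx) and socket timeouts are all OSError subclasses.
        return False
```

For example, `site_is_up("http://habitat.dev.local")`, where `habitat.dev.local` is only an assumed host name.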